ClickAIXR: On-Device Multimodal Vision-Language Interaction with Real-World Objects in Extended Reality

Authors

Dawar Khan, Alexandre Kouyoumdjian, Xinyu Liu, Omar Mena, Dominik Engel, Ivan Viola

Description

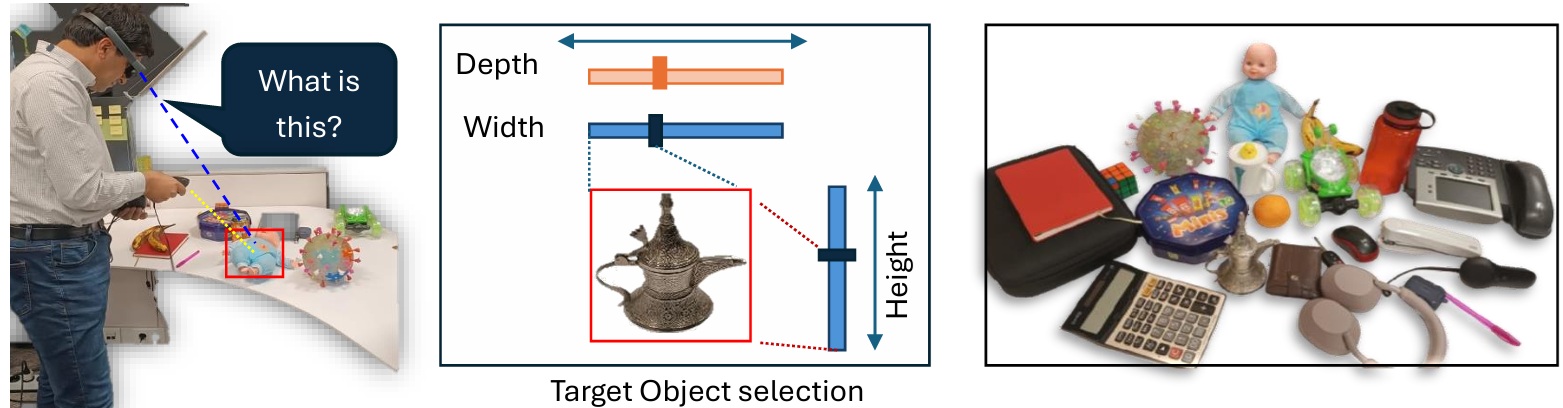

ClickAIXR is a novel on-device framework for multimodal vision-language interaction with real-world objects in Extended Reality (XR). Unlike prior systems that rely on cloud-based AI or gaze-based selection, ClickAIXR integrates an on-device Vision-Language Model (VLM) with a controller-based object selection mechanism. This lets users precisely select objects in XR and ask natural language questions about them, receiving responses as both text and speech. By performing all inference locally, ClickAIXR reduces latency, preserves privacy, and improves transparency. The object-centered interaction also reduces ambiguity compared to gaze- or voice-only interfaces. The system is implemented using the Magic Leap SDK (C API) with ONNX-based local VLM inference. In a user study comparing ClickAIXR with Gemini 2.5 Flash and ChatGPT 5, ClickAIXR showed moderate latency and a positive user experience.
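To make the object-centered interaction concrete, the following is a minimal sketch of how a controller selection might be turned into a cropped image plus a question for a local VLM. It is an illustration under stated assumptions, not the ClickAIXR implementation: the names `Box`, `selection_box`, `crop`, and `build_query`, the square selection region, and the nested-list frame representation are all hypothetical, and the sketch uses plain Python rather than the Magic Leap C API or ONNX inference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Pixel rectangle; right and bottom are exclusive. Hypothetical type."""
    left: int
    top: int
    right: int
    bottom: int


def selection_box(cx, cy, half_size, width, height):
    """Square box centered on the selected pixel, clamped to the frame.

    Assumption: the controller ray has already been projected to the
    camera-image pixel (cx, cy).
    """
    left = max(0, cx - half_size)
    top = max(0, cy - half_size)
    right = min(width, cx + half_size)
    bottom = min(height, cy + half_size)
    if left >= right or top >= bottom:
        raise ValueError("selection lies outside the frame")
    return Box(left, top, right, bottom)


def crop(frame, box):
    """Cut the box out of a frame stored as a list of pixel rows."""
    return [row[box.left:box.right] for row in frame[box.top:box.bottom]]


def build_query(frame, cx, cy, half_size, question):
    """Pair the cropped object region with the user's question.

    The returned dict stands in for whatever input a local VLM would take.
    """
    height, width = len(frame), len(frame[0])
    box = selection_box(cx, cy, half_size, width, height)
    return {"image": crop(frame, box), "question": question, "box": box}
```

Sending only the cropped region, rather than the whole camera frame, is one plausible way an explicit selection could reduce ambiguity about which object a question refers to.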