Nanotilus: Generator of Immersive Guided-Tours in Crowded 3D Environments

Authors

Ruwayda Alharbi, Ondrej Strnad, Laura Luidolt, Manuela Waldner, David Kouril, Ciril Bohak, Tobias Klein, Eduard Gröller, Ivan Viola

Description

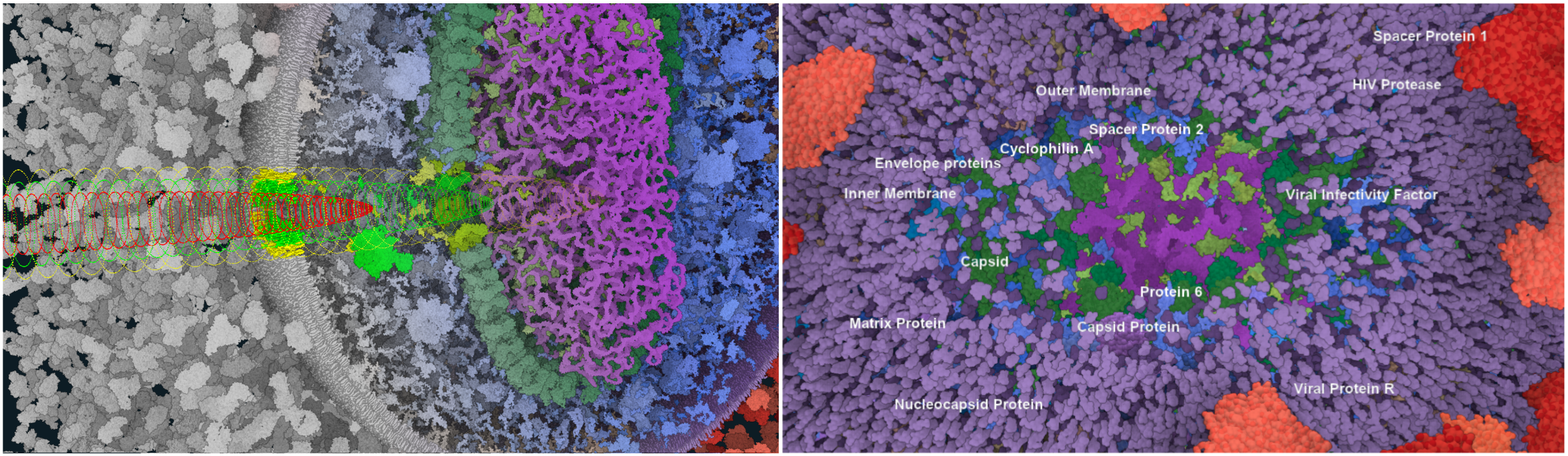

This paper proposes Nanotilus -- a new visibility and guidance approach for very dense environments that generates an endoscopic inside-out experience instead of outside-in viewing, preserving the immersive aspect of virtual reality. The approach consists of two novel, tightly coupled mechanisms that control scene sparsification simultaneously with camera path planning. The sparsification strategy is localized around the camera and is realized as a multi-scale, multi-shell, variety-preserving technique. When Nanotilus dives into the structures to capture internal details residing on multiple scales, it guides the camera using depth-based path planning. In addition to sparsification and path planning, we complete the tour generation with an animation controller, textual annotation, and text-to-visualization conversion. We demonstrate the generated guided tours on mesoscopic biological models -- SARS-CoV-2 and HIV. We evaluate the Nanotilus experience against a baseline outside-in sparsification and navigation technique in a formal user study with 29 participants. While users can maintain a better overview using the outside-in sparsification, the study confirms our hypothesis that Nanotilus leads to stronger engagement and immersion.
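To make the idea of camera-local, multi-shell, variety-preserving sparsification more concrete, the following is a minimal illustrative sketch and not the paper's implementation. The function name `sparsify`, the shell radii, the per-shell keep fractions, and the rule of keeping at least one instance of every type in every shell are all assumptions made for this example.

```python
import math
import random
from bisect import bisect_right
from collections import defaultdict


def sparsify(instances, camera, shell_radii, keep_fractions, seed=0):
    """Thin out instances in concentric shells around the camera.

    instances      -- list of (type_name, (x, y, z)) tuples
    camera         -- (x, y, z) camera position
    shell_radii    -- ascending outer radii of the inner shells (assumed values)
    keep_fractions -- fraction kept per shell, innermost first; needs
                      len(shell_radii) + 1 entries (last one is "beyond")
    """
    rng = random.Random(seed)

    # Group instances by (shell index, type) using distance to the camera.
    groups = defaultdict(list)
    for kind, pos in instances:
        shell = bisect_right(shell_radii, math.dist(pos, camera))
        groups[(shell, kind)].append((kind, pos))

    kept = []
    for (shell, _kind), members in groups.items():
        # Assumed variety rule: never drop a type completely from a shell.
        n_keep = max(1, round(keep_fractions[shell] * len(members)))
        kept.extend(rng.sample(members, n_keep))
    return kept
```

Calling `sparsify(instances, camera, [1.0, 3.0], [0.1, 0.5, 1.0])` would thin the region closest to the camera most aggressively while leaving distant instances untouched; the actual shell layout and selection criteria used by Nanotilus are described in the paper itself.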

Sources

- Video - Nanotilus framework

- Video - Sample A (SARS-CoV-2 in blood plasma dataset)

- Video - Sample B (HIV in blood plasma dataset)